Neural networks are at the core of modern artificial intelligence, powering everything from voice assistants to self-driving cars. But how do these systems actually learn? Unlike traditional software, which follows rules written by hand, a neural network improves its performance by analyzing data and adjusting its internal parameters. This process allows it to recognize patterns, make predictions, and solve complex problems. Understanding this process shows how machines can learn from experience, in a way loosely analogous to how humans do.

What Is a Neural Network?

A neural network is a computational model inspired by the human brain. It consists of layers of interconnected nodes, often called “neurons.”

These networks:

- Receive input data

- Process it through multiple layers

- Produce an output or prediction

Each connection has a weight, a number that determines how strongly a signal passes from one neuron to the next. These weights are what the network adjusts as it learns.

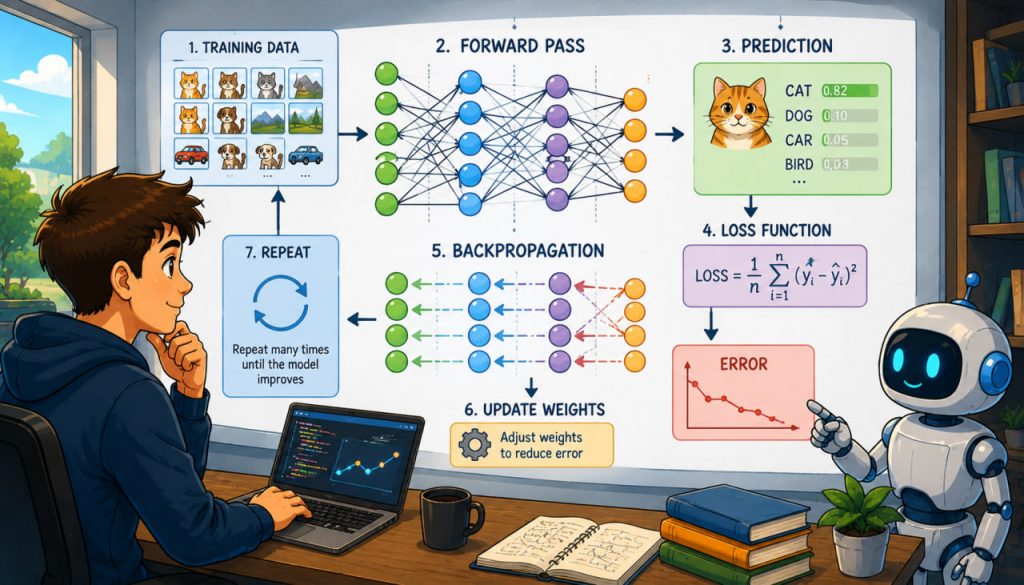
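To make the idea of weights concrete, here is a minimal Python sketch of a single neuron. It assumes the common design in which a neuron multiplies each input by its connection weight, adds the results plus a bias term, and passes the total through a sigmoid activation function. The function names and numbers are illustrative only.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs: list[float], weights: list[float], bias: float) -> float:
    """One artificial neuron (illustrative): weighted sum of inputs plus a bias,
    passed through a sigmoid activation."""
    if len(inputs) != len(weights):
        raise ValueError("need exactly one weight per input")
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)


# Same weights, different inputs: the input on the positive weight pushes
# the output up, the input on the negative weight pushes it down.
print(neuron([1.0, 0.0], [2.0, -1.0], bias=0.0))  # sigmoid(2.0), about 0.881
print(neuron([0.0, 1.0], [2.0, -1.0], bias=0.0))  # sigmoid(-1.0), about 0.269
```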

The Learning Process

Neural networks learn through a process called training.

During training:

- The model is given data

- It makes predictions

- Errors are calculated

- The weights are adjusted to reduce the error

This cycle repeats many times, gradually improving accuracy.
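The training cycle above can be sketched in a few lines of Python. This is a deliberately tiny, hypothetical example: the "network" is a single weight `w`, the model predicts `w * x`, the error is squared, and the weight is nudged using the derivative of that squared error (gradient descent, covered below). The learning rate of 0.05 is an assumed value.

```python
# Hypothetical toy data: each input x is paired with its correct output y = 2x.
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]

w = 0.0               # the model's only adjustable parameter (a single weight)
learning_rate = 0.05  # size of each adjustment (an assumed value)

for _ in range(100):                 # the cycle repeats many times
    for x, y_true in data:           # 1. the model is given data
        y_pred = w * x               # 2. it makes a prediction
        error = y_pred - y_true      # 3. the error is calculated
        # 4. adjust w against the derivative of the squared error (y_pred - y_true)**2
        w -= learning_rate * 2 * error * x

print(round(w, 4))  # 2.0
```

Starting from zero, the weight moves toward 2.0, the value that makes every prediction match its label.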

What Is Training Data?

Training data is the foundation of learning.

It includes:

- Input examples (images, text, numbers)

- Correct outputs (labels or answers)

For example:

- Image → “cat”

- Text → “positive” or “negative” (sentiment)

The quality of data directly affects the performance of the model.
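A small experiment illustrates why data quality matters. The sketch below swaps in a simpler model than a neural network, a single weight fitted with ordinary least squares, and compares the weight learned from correctly labeled examples with the weight learned when one label is wrong. The data and the `fit_slope` helper are hypothetical.

```python
def fit_slope(data: list[tuple[float, float]]) -> float:
    """Hypothetical helper: best-fit weight w for y = w * x (least squares, no bias)."""
    numerator = sum(x * y for x, y in data)
    denominator = sum(x * x for x, _ in data)
    return numerator / denominator


clean = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]  # every label follows y = 2x
noisy = [(1.0, 2.0), (2.0, 4.0), (3.0, 0.0)]  # the last label is wrong

print(fit_slope(clean))  # 2.0
print(fit_slope(noisy))  # 10/14, about 0.714
```

One bad label out of three pulls the learned weight far from the true value of 2.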

Forward Pass: Making a Prediction

In the first step, data moves through the network.

This is called the forward pass.

During this stage:

- Input data passes through layers

- Each neuron computes a weighted sum of its inputs (plus a bias term) and applies an activation function to it

- A prediction is produced
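A forward pass can be sketched as a loop over layers. This minimal example assumes a hypothetical network with two inputs, three hidden neurons using the ReLU activation, and one output neuron. The weights are set by hand purely for illustration, whereas a real network would learn them during training.

```python
def relu(z: float) -> float:
    """ReLU activation: pass positive values through, replace negatives with 0."""
    return max(0.0, z)


def identity(z: float) -> float:
    return z


def dense(inputs, weights, biases, activation):
    """One fully connected layer: each row of `weights` belongs to one neuron."""
    return [
        activation(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]


def forward(x):
    # Hidden layer: 2 inputs -> 3 neurons (hand-picked, hypothetical weights)
    hidden = dense(x, [[1.0, -1.0], [0.5, 0.5], [-1.0, 2.0]], [0.0, 0.0, 0.0], relu)
    # Output layer: 3 hidden values -> 1 prediction, no activation
    (prediction,) = dense(hidden, [[1.0, 1.0, 1.0]], [0.0], identity)
    return prediction


print(forward([1.0, 2.0]))  # hidden = [0.0, 1.5, 3.0], so the prediction is 4.5
```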

Loss Function: Measuring Error

After making a prediction, the network compares it to the correct answer.

This difference is measured using a loss function.

The loss function:

- Calculates how wrong the prediction is

- Provides a numerical value for error

The goal is to minimize this error.
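Two widely used loss functions are mean squared error, common when predicting numbers, and binary cross-entropy, common for yes/no classification. The sketch below is one straightforward way to compute them in plain Python; deep learning libraries provide their own optimized versions. Note how cross-entropy punishes a confident wrong answer far more than a confident right one.

```python
import math


def mean_squared_error(predictions, targets):
    """Average of the squared differences between predictions and targets."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)


def binary_cross_entropy(prob, label, eps=1e-12):
    """Loss for a yes/no prediction; prob is the predicted chance that label is 1."""
    prob = min(max(prob, eps), 1 - eps)  # clamp to avoid log(0)
    return -(label * math.log(prob) + (1 - label) * math.log(1 - prob))


print(mean_squared_error([2.5, 0.0], [3.0, 0.0]))  # 0.125
print(binary_cross_entropy(0.9, 1))  # about 0.105: confident and right, small loss
print(binary_cross_entropy(0.1, 1))  # about 2.303: confident and wrong, large loss
```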

Backpropagation: Learning from Mistakes

The key learning step is called backpropagation.

In this process:

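- The error is sent backward through the network, layer by layer

- The chain rule from calculus is used to compute how much each weight contributed to the error (that weight's gradient)

- Each weight is then adjusted slightly in the direction that reduces the error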

This is repeated thousands or millions of times.
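Backpropagation is the chain rule from calculus applied layer by layer. The sketch below works it out by hand for a hypothetical two-weight network (input `x`, hidden value `h = relu(w1 * x)`, prediction `y = w2 * h`, squared-error loss) and then checks one gradient numerically with a finite difference. Real frameworks automate this bookkeeping, but the underlying arithmetic is the same idea.

```python
def forward_and_backward(x, target, w1, w2):
    """Hypothetical network: h = relu(w1 * x), y = w2 * h, loss = (y - target)**2."""
    # Forward pass
    z = w1 * x
    h = max(0.0, z)
    y = w2 * h
    loss = (y - target) ** 2

    # Backward pass: chain rule, from the loss back toward the input
    dloss_dy = 2 * (y - target)
    dloss_dw2 = dloss_dy * h                       # because y = w2 * h
    dloss_dh = dloss_dy * w2
    dloss_dz = dloss_dh * (1.0 if z > 0 else 0.0)  # derivative of ReLU
    dloss_dw1 = dloss_dz * x                       # because z = w1 * x
    return loss, dloss_dw1, dloss_dw2


loss, grad_w1, grad_w2 = forward_and_backward(x=2.0, target=1.0, w1=0.5, w2=3.0)
print(loss, grad_w1, grad_w2)  # 4.0 24.0 4.0

# Sanity check: nudge w1 up and down and measure how the loss changes.
eps = 1e-6
numeric_w1 = (
    forward_and_backward(2.0, 1.0, 0.5 + eps, 3.0)[0]
    - forward_and_backward(2.0, 1.0, 0.5 - eps, 3.0)[0]
) / (2 * eps)
print(round(numeric_w1, 3))  # 24.0
```

The hand-computed gradient and the numerical estimate agree, which is a common way to test a backpropagation implementation.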

Gradient Descent: Optimizing the Model

To update the network, a method called gradient descent is used.

It works by:

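- Computing the gradient of the loss, which points in the direction in which the error increases fastest

- Adjusting each weight a small step in the opposite direction, with the step size set by a parameter called the learning rate

Over time, the model typically becomes more accurate.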
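Gradient descent is easiest to see on a loss with a single weight. In this sketch, the hypothetical loss `(w - 3)**2` is smallest at `w = 3`; each step computes the derivative and moves `w` a little in the opposite direction. The learning rate of 0.1 and the 100 steps are arbitrary choices for illustration.

```python
def loss(w: float) -> float:
    """Hypothetical loss with its minimum at w = 3."""
    return (w - 3.0) ** 2


def gradient(w: float) -> float:
    """Derivative of the loss with respect to w."""
    return 2.0 * (w - 3.0)


w = 0.0
learning_rate = 0.1  # step size (an assumed value)
for _ in range(100):
    w -= learning_rate * gradient(w)  # step opposite the gradient, i.e. downhill

print(round(w, 4))  # 3.0
```

The learning rate matters: for this particular loss, any value above 1.0 makes each step overshoot by more than it corrects, and `w` diverges instead of settling at 3.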

Types of Learning

Neural networks can learn in different ways.

Supervised Learning

- Uses labeled data

- Most common approach

Unsupervised Learning

- Finds patterns without labels

Reinforcement Learning

- Learns through rewards and penalties

Each method is used for different tasks.

Role of Activation Functions

Activation functions determine a neuron's output from the weighted sum of its inputs.

They:

- Add non-linearity to the model

- Allow the network to learn complex patterns

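Without them, any stack of layers would collapse into a single linear transformation, so adding more layers would not let the network represent more complex patterns.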
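The claim that activation functions add non-linearity can be checked directly. The sketch below uses hypothetical single-input layers to show that two stacked layers without an activation compute exactly the same thing as one layer, while inserting ReLU (one common activation function, alongside sigmoid and tanh) produces a bend that no single linear layer can reproduce.

```python
import math


def relu(z: float) -> float:
    return max(0.0, z)


# Hypothetical weights and biases for two single-neuron layers.
W1, B1, W2, B2 = 2.0, 1.0, -3.0, 0.5


def two_linear_layers(x: float) -> float:
    """Two stacked layers with no activation function."""
    return W2 * (W1 * x + B1) + B2


def one_linear_layer(x: float) -> float:
    """A single layer with weight W2*W1 and bias W2*B1 + B2."""
    return (W2 * W1) * x + (W2 * B1 + B2)


def two_layers_with_relu(x: float) -> float:
    """The same two layers with ReLU between them."""
    return W2 * relu(W1 * x + B1) + B2


for x in [-2.0, 0.0, 1.5]:
    # Without an activation, depth adds nothing: the results always match.
    assert math.isclose(two_linear_layers(x), one_linear_layer(x))

# With ReLU, the output is flat for x < -0.5 and linear above it: a bend.
print(two_layers_with_relu(-2.0), two_layers_with_relu(1.5))  # 0.5 -11.5
```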

Expert Insight

AI researcher Geoffrey Hinton, often called the “godfather of deep learning,” has said:

“Neural networks learn by adjusting connections between units, much like the brain strengthens or weakens synapses.”

This highlights the analogy between artificial and biological learning, although artificial neurons are far simpler than biological ones.

Challenges in Training Neural Networks

Training is not always simple.

Common challenges include:

- Overfitting (memorizing the training data instead of learning patterns that generalize to new data)

- Large data requirements

- High computational cost

- Bias in data

Careful design and testing are essential.

Why Neural Networks Are Powerful

Neural networks are effective because they can:

- Detect complex patterns

- Adapt to new data

- Handle large datasets

They are used in:

- Image recognition

- Natural language processing

- Medical diagnosis

Why This Matters

Neural networks are shaping the future of technology.

They influence:

- Automation

- Decision-making

- Scientific research

Understanding how they learn helps us use them responsibly and effectively.

Interesting Facts

- Neural networks can contain millions or billions of parameters.

- Training can take hours or even weeks.

- They often improve with more high-quality data.

- Deep learning refers to neural networks with many hidden layers.

- Neural networks are used in everyday apps.
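The parameter counts mentioned above follow from simple arithmetic. In a fully connected network, each layer has one weight per connection and, in the usual design, one bias per neuron. This sketch counts both; the layer sizes are hypothetical examples (784 inputs would correspond to a 28 by 28 pixel image).

```python
def count_parameters(layer_sizes: list[int]) -> int:
    """Weights plus biases in a fully connected network with these layer widths."""
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out + n_out  # one weight per connection, one bias per neuron
    return total


print(count_parameters([784, 128, 10]))  # 101770
print(count_parameters([4096] * 12))     # 184594432
```

Even a modest stack of wide layers passes a hundred million parameters, which is why training can be computationally expensive.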

Glossary

- Neural Network — A system of connected nodes that processes data.

- Training Data — Data used to teach a model.

- Backpropagation — Method for computing how much each weight contributed to the error, so the weights can be adjusted.

- Gradient Descent — Optimization technique for minimizing error.

- Activation Function — A function that determines neuron output.